Google Glass vs Epson Glass Quant AR Research

2015 - 2016 | Glass AR | Google Glass | Quant UX Research | ACM CHI 2015

Preface

One of the main benefits of AR technology is that it can turn complex use cases into ordinary, routine processes. Exciting developments in eye-wearable technology and its potential industrial applications warrant a thorough understanding of its advantages and drawbacks through empirical evidence.

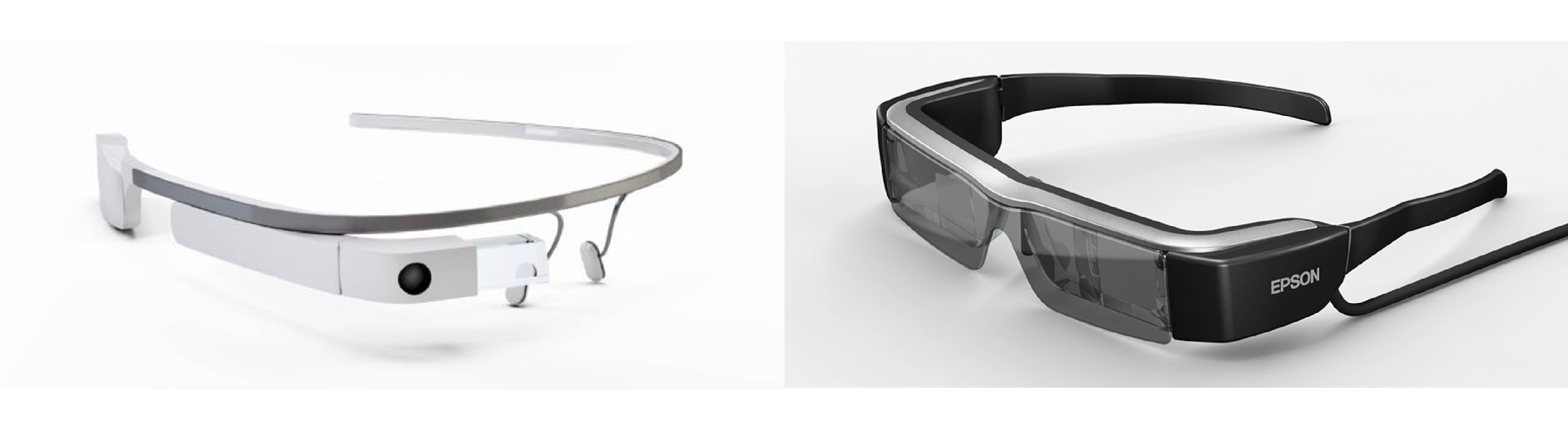

At Siemens, we conducted an experimental user study testing two AR glasses, Google Glass and Epson Moverio, and comparing them with traditional paper and tablet media. The research paper was published at ACM CHI 2015.

The AR Glass Design Logic: from Complex to Simple

We found a significant effect of display position: the peripheral eye-wearable display resulted in longer completion times than the central display. We found no effect of hands-free operation. The technology effects were also modulated by Task and Action Type. We discuss the human factors implications for designing more effective eye-wearable technology, including display position, issues of monocular display, and how the physical proximity of the technology affects users’ reliance level.

Google Glass vs Epson Moverio BT 200

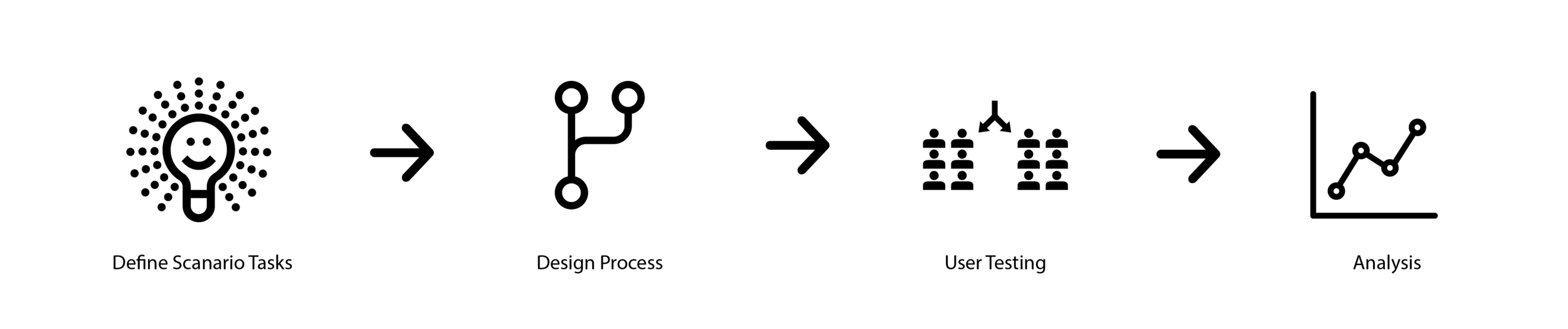

Research Process

Introduction

Recent advances in eye-wearable technology, such as Google Glass, have generated a lot of excitement about its potential applications, especially in industrial settings. However, because end-user reviews of such technologies have been mixed, we need to understand their fundamental characteristics and their impact on human performance through empirical evidence.

Research Process

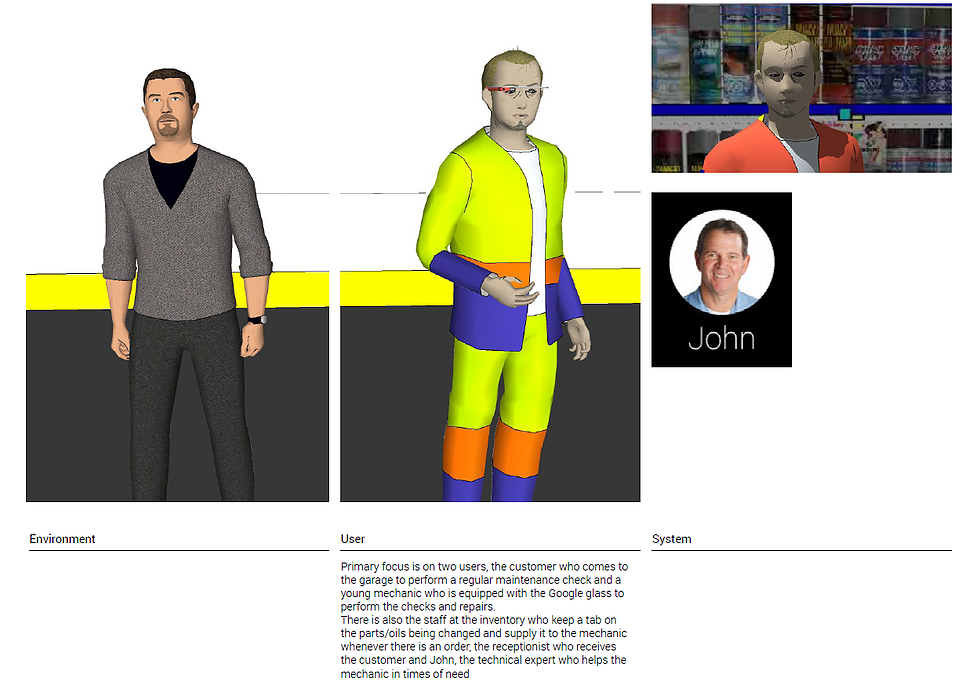

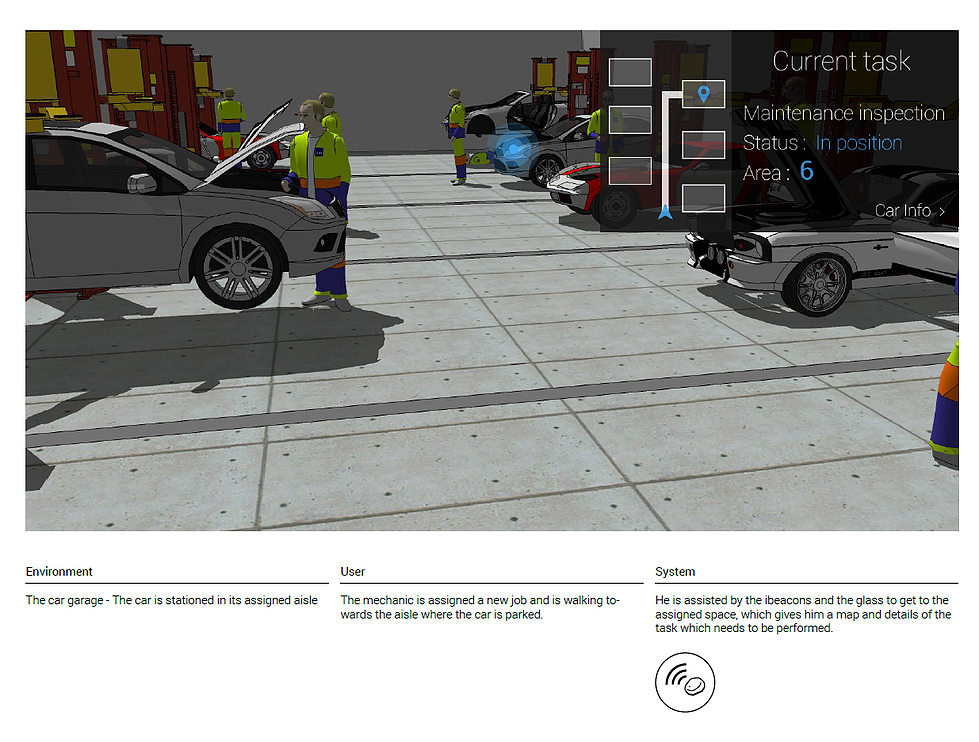

Persona and Scenario

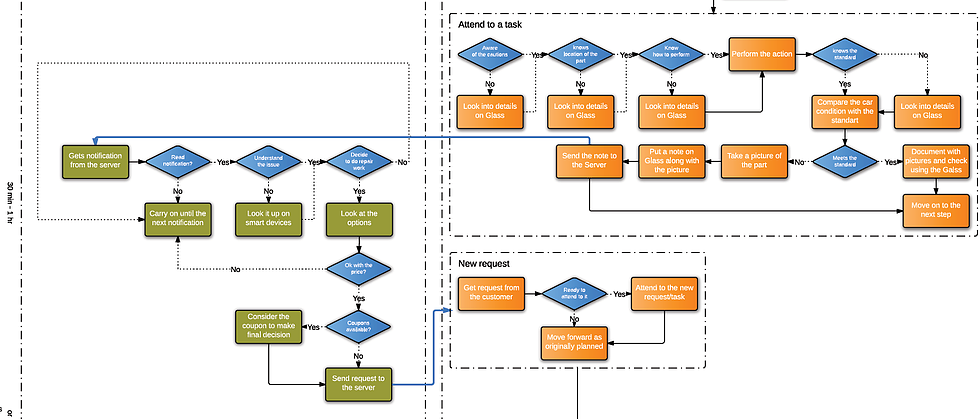

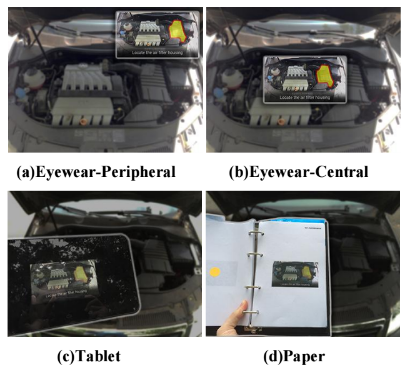

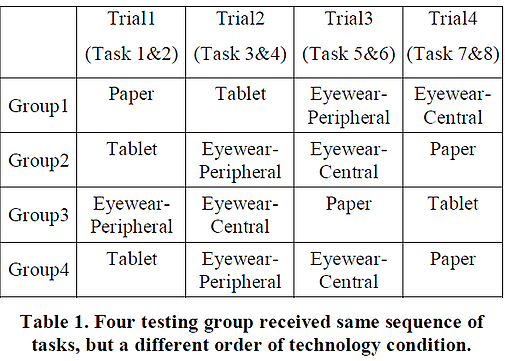

Our goal was to investigate how different eye-wearable technology attributes affect user performance on realistic maintenance tasks. We studied three independent variables: Technology, Task, and Action Type. We conducted an experiment involving a varied set of car maintenance tasks and four experimental conditions that exhibit eye-wearable and non-eye-wearable factors. In all four conditions, participants were given step-by-step instructions that were identical for the same task.

Task Workflow for User Testing


Partially Defined Use Case

Experimental Research and Data Analysis

Test Device

Google Glass

Epson Moverio BT-200

Tablet

Paper

Participants and Procedure

We recruited 12 participants (3 female, 9 male), aged 20 to 27, from Siemens Corporate Technology in Princeton, New Jersey. Participants had an average of 4 years of driving experience and reported limited experience with car maintenance. All participants had normal or corrected-to-normal vision, except one who was red-green color blind.

We tested all participants for eye dominance using the Miles and Porta tests (seven were right-eye dominant and five were left-eye dominant).

Data Analysis and Results

Final Data Collection

Overall, participants completed the tasks very accurately: ten made no errors, and the other two each made only one error in a single task step. Because errors were so rare, the error data were not included in the statistical analysis. A 3-way ANOVA (Technology x Task x Action Type) applied to completion time showed significant main effects for Technology (F(3, 582) = 3.404, p = .017, η² = .017, power (1 − β) = .768), Task (F(7, 582) = 8.862, p < .001, η² = .096, power (1 − β) = 1.000), and Action Type (F(3, 582) = 38.140, p < .001, η² = .164, power (1 − β) = 1.000).
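The reported effect sizes can be sanity-checked from the F statistics alone. The sketch below assumes the effect sizes are partial eta squared, which the text does not state explicitly, and uses the standard relation partial η² = (F × df_effect) / (F × df_effect + df_error). The function name `partial_eta_squared` is illustrative only.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Recover partial eta squared from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# (F, df_effect, df_error) copied from the main effects reported above.
main_effects = {
    "Technology": (3.404, 3, 582),
    "Task": (8.862, 7, 582),
    "Action Type": (38.140, 3, 582),
}

# Round to three decimals to match the precision used in the text.
effect_sizes = {
    name: round(partial_eta_squared(*stats), 3)
    for name, stats in main_effects.items()
}

for name, value in effect_sizes.items():
    print(f"{name}: partial eta squared = {value:.3f}")
```

Under that assumption, the recomputed values agree with the reported .017, .096, and .164, which suggests the figures are internally consistent.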

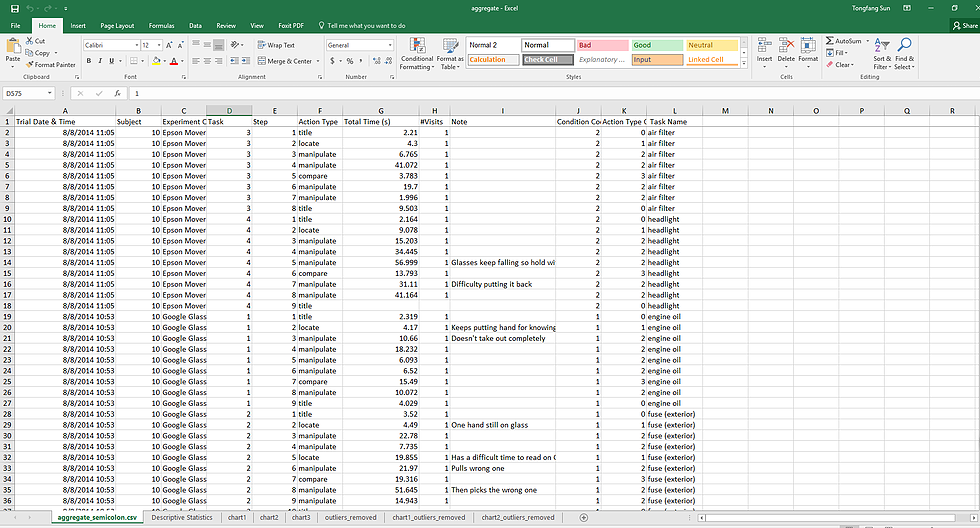

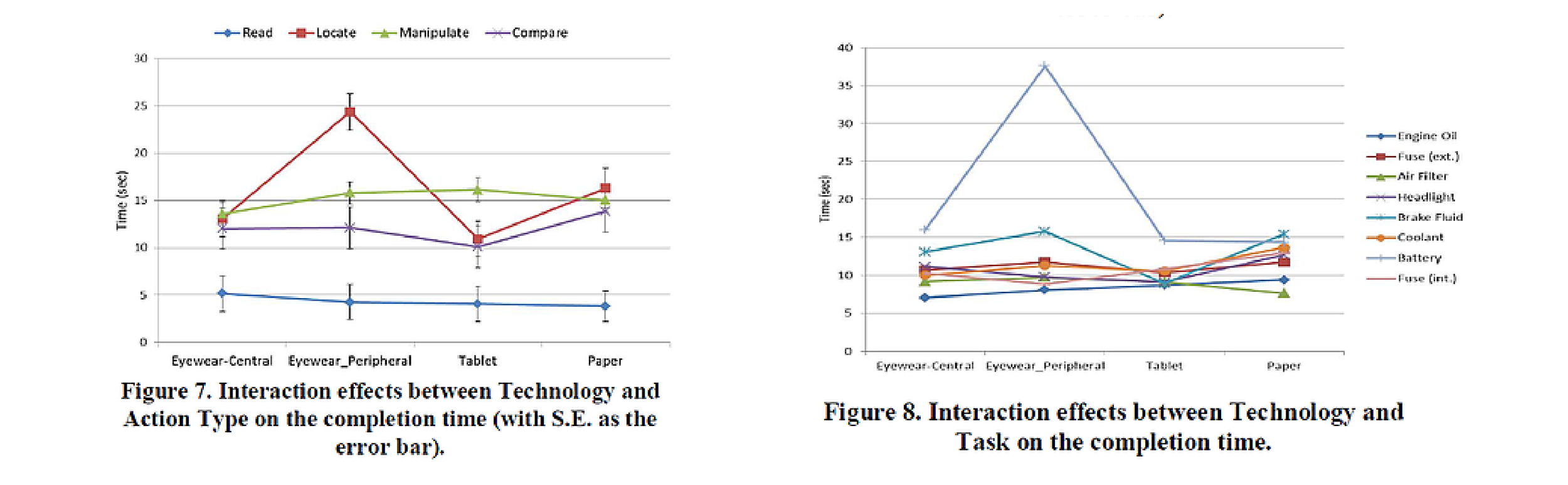

There were also significant two-way interaction effects for Technology x Task (F(21, 582) = 2.327, p = .001, η² = .077, power (1 − β) = .997) and Technology x Action Type (F(9, 582) = 2.808, p = .003, η² = .042, power (1 − β) = .961), as well as a significant three-way interaction effect for Technology x Task x Action Type (F(63, 582) = 2.469, p < .001, η² = .211, power (1 − β) = 1.000).
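The interaction degrees of freedom follow from the number of levels of each factor. In the sketch below, the four Technology levels come from the four experimental conditions described earlier; the eight Task levels and four Action Type levels are inferred from the main-effect degrees of freedom (levels minus one) and are an assumption, not something the text states directly.

```python
from math import prod

# Levels per factor. Technology = 4 conditions (stated in the text);
# Task = 8 and Action Type = 4 are inferred from main-effect df of 7 and 3.
levels = {"Technology": 4, "Task": 8, "Action Type": 4}


def interaction_df(*factors):
    """Degrees of freedom of an interaction: product of (levels - 1) per factor."""
    return prod(levels[factor] - 1 for factor in factors)


interaction_dfs = {
    "Technology x Task": interaction_df("Technology", "Task"),
    "Technology x Action Type": interaction_df("Technology", "Action Type"),
    "Technology x Task x Action Type": interaction_df(
        "Technology", "Task", "Action Type"
    ),
}

for name, df in interaction_dfs.items():
    print(f"{name}: df = {df}")
```

With these levels, the computed values match the numerator degrees of freedom in the reported F tests (21, 9, and 63).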

Discussion

Finding 1: Eyewear-Central was faster than Eyewear-Peripheral

Overall, Eyewear-Central yielded a shorter completion time than Eyewear-Peripheral. This can be accounted for by a shorter time to access or process the information in Eyewear-Central compared to Eyewear-Peripheral. Different types of eye movement are involved in accessing information in these two display conditions.

Finding 2: No difference in completion time between Eyewear and Non-Eyewear conditions

To our surprise, we did not detect a significant difference between Eyewear technologies (Eyewear-Peripheral + Eyewear-Central) and Non-Eyewear technologies (Tablet + Paper). We had expected the hands-free characteristic, as well as the theoretically shorter information access time in the Eyewear conditions, to affect completion time. Further analysis of participant behaviors revealed why those factors had a small effect size and were evened out by other factors.

Finding 3: Eyewear-Peripheral was preferred to all the other technologies

User preference ratings showed that Eyewear-Peripheral was the most preferred technology (6 participants most preferred Eyewear-Peripheral, 3 Eyewear-Central, 2 Tablet, and 1 Paper). Their justifications included “it is hands-free”, “it is light and comfortable”, “it is unobstructive and nondistracting”, and “it is convenient”. The biggest complaint was that they “didn’t like to look in the corner” and “didn’t like to change their vision from up to down because it is awkward and hard to adapt”. Comments for Eyewear-Central included “heavy”, “uncomfortable”, and “don't like having the information in front of me always”. Comments for both Tablet and Paper included “see things clearly”, “no need to adapt vision”, “easy to carry around”, “worried about dropping it now and then”, “could get dirty”, “everyone knows how to use”, but “annoying to turn the pages” (for Paper).

Videos & Future Work

Prototype Demo